TL;DR:

- Building production-grade generative AI requires integrated toolchains, evaluation, and customization.

- Defining clear business problems and success metrics is crucial before development begins.

- Continuous monitoring, evaluation, and ethics considerations are key to scalable, trustworthy AI applications.

Generative AI is no longer a research curiosity sitting behind a paywall. It's a product capability that startups and enterprise teams are racing to ship. But there's a wide gap between running a demo on a pre-trained model and delivering a production-ready application that actually serves your users. The production-grade AI development process involves integrated toolchains, prompt engineering, model selection, customization, and rigorous evaluation — all working together. This guide breaks that process into clear, practical steps so you can move from concept to deployment without losing momentum or burning budget.

Table of Contents

- Clarifying your AI use case and project requirements

- Assembling tools, team, and data for generative AI apps

- Step-by-step development: From prototype to production

- Evaluating, securing, and scaling your generative AI application

- What most leaders get wrong about deploying generative AI apps

- Bring your generative AI vision to life with trusted experts

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Define your AI use case | Start with a real business problem and clear value statement for your generative AI app. |

| Equip your project team | Success depends on cross-functional expertise, robust toolchains, and curated data. |

| Execute focused development | Iterate quickly with prompt engineering and choose models fit for your requirements and scale. |

| Prioritize evaluation and security | Combine automated tools and human review with end-to-end security measures for reliable, scalable AI. |

Clarifying your AI use case and project requirements

Now that you understand the strategic importance of generative AI, let's start at the foundation: mapping out your true business needs before writing a line of code.

The most expensive mistake in AI product development isn't choosing the wrong model. It's solving the wrong problem. Many teams get excited about what a large language model can do and reverse-engineer a use case around it. That's AI solutionism, and it leads to bloated projects with no measurable return. A good AI in software innovation strategy always starts with the business problem, not the technology.

Before you write a single line of code, answer these questions honestly:

- What specific problem does this app solve? Be precise. "Improve customer support" is not a use case. "Reduce first-response time by 40% using AI-generated draft replies" is.

- Why does this require a generative AI model? A good rule of thumb: start with a clear 'why LLM?' to avoid overkill. Sometimes a rule-based system or a simple classifier does the job better and cheaper.

- Who are the end users, and what does success look like for them? Define measurable outcomes: task completion rate, time saved, error reduction.

- What data do you have, and is it usable? AI apps are only as good as the data feeding them.

- What are your compliance and governance obligations? Healthcare, finance, and legal sectors face strict data regulations that must be designed in from day one.

Stakeholder alignment is just as critical as technical planning. If your legal team, data team, and product team aren't aligned on scope and risk tolerance before development starts, you'll face costly rework later. Build a one-page requirements document that captures the problem statement, success metrics, data sources, and known constraints.

Pro Tip: Map your AI use case to a dollar value. If you can't estimate how much time, cost, or revenue is at stake, the business case isn't strong enough to justify the investment yet.

Also factor in AI ethics considerations early. Bias in training data, fairness in outputs, and user privacy are not afterthoughts. They're design requirements. Teams that treat ethics as a checkbox at the end of development consistently face post-launch crises. For context, AI is reshaping small business operations across sectors, which means the bar for responsible deployment is rising fast.

Assembling tools, team, and data for generative AI apps

With your use-case sharpened, it's time to gather the resources and talent needed for implementation.

Building a generative AI app is a cross-functional effort. No single engineer, no matter how skilled, can carry it alone. You need a team that covers the full stack of concerns: model behavior, infrastructure, product experience, and quality assurance.

| Role | Responsibility |

|---|---|

| ML engineer | Model selection, fine-tuning, evaluation |

| Software developer | API integration, backend logic, frontend |

| Product lead | Use-case definition, roadmap, user feedback |

| QA engineer | Testing, edge case identification, regression |

| Cloud/DevOps engineer | Deployment, CI/CD pipelines, scaling |

| Data engineer | Data collection, cleaning, pipeline management |

Beyond people, your toolchain matters enormously. Integrated toolchains with CI/CD and observability are essential for modern AI apps that need to evolve continuously without breaking in production. Key tools to evaluate include MLflow for experiment tracking, LangChain or LlamaIndex for LLM orchestration, and cloud ML platforms like AWS SageMaker, Google Vertex AI, or Azure ML.

Data preparation is where most projects quietly fall apart. Here's what to prioritize:

- Relevance: Your training or retrieval data must closely match real-world user queries and contexts.

- Quality over quantity: A smaller, well-labeled dataset outperforms a massive, noisy one every time.

- Licensing and consent: Ensure you have legal rights to use the data, especially if it comes from third-party sources.

- Privacy compliance: Anonymize personally identifiable information before it enters any pipeline.

For teams building scalable AI development environments, infrastructure decisions made now will determine how easily you can retrain models, add new features, and handle traffic spikes at launch. If you're also considering mobile apps with AI, plan your API architecture to support both web and mobile clients from the start. Security and privacy requirements should be baked into your data pipeline design, not patched in afterward.

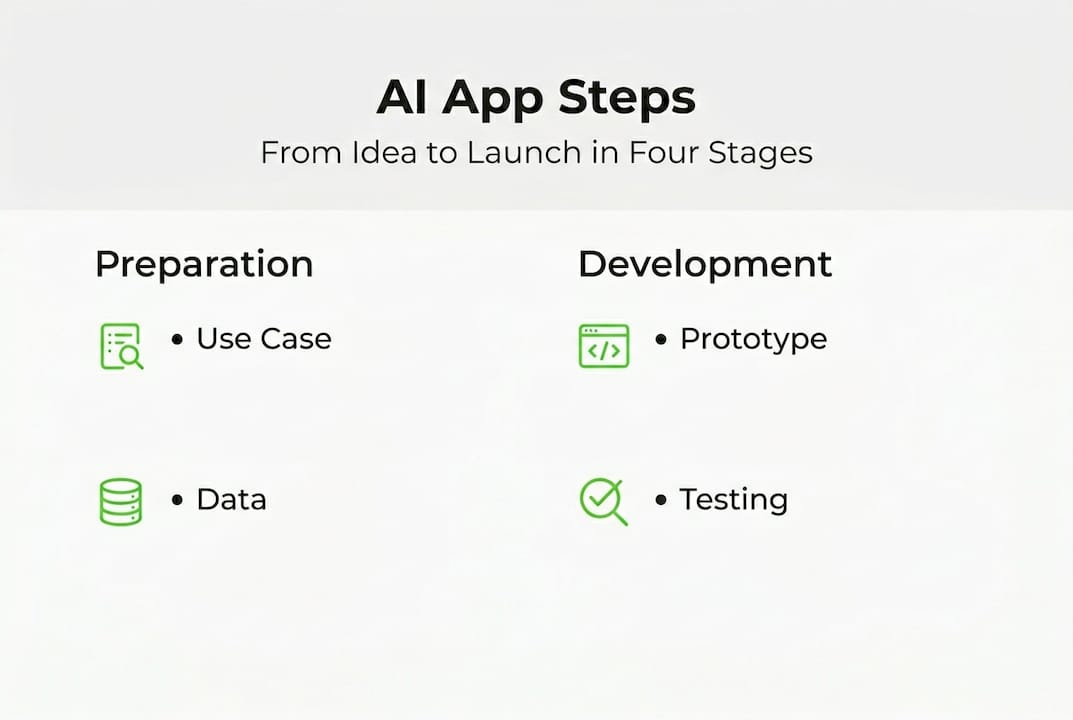

Step-by-step development: From prototype to production

Once your team and tools are in place, it's time to build, test, and refine your generative AI app.

The fastest path to learning is building a rough prototype quickly. Don't wait until you have the perfect model or the cleanest dataset. Start with what you have and iterate.

- Define your prompt strategy. Prompt engineering is your first lever. Write clear, structured prompts that constrain the model's behavior and guide it toward your desired output format. Test variations systematically.

- Select a base model. Compare options across size, latency, cost, and domain fit. Open-source models like Llama or Mistral work well for cost-sensitive projects. Proprietary APIs like GPT-4o or Claude offer stronger out-of-the-box performance for complex tasks.

- Customize for accuracy. Generic models rarely meet production standards. Use RAG, grounding, and tuning techniques to improve accuracy. RAG (Retrieval-Augmented Generation) lets your model pull from a live knowledge base. Fine-tuning adjusts model weights using your domain-specific data. RLHF (Reinforcement Learning from Human Feedback) aligns outputs with user preferences.

- Integrate via APIs. Connect your model to your existing application stack through well-documented API endpoints. Keep the model layer loosely coupled so you can swap models without rebuilding your entire app.

- Test for edge cases. Don't just test happy paths. Deliberately probe for failure modes: hallucinations, off-topic responses, prompt injection attempts, and performance under load.

"The teams that ship the best AI products aren't the ones with the biggest models. They're the ones who iterate fastest on evaluation and feedback."

A useful comparison when selecting your model approach:

| Approach | Best for | Trade-off |

|---|---|---|

| Prompt engineering only | Fast prototyping, general tasks | Lower accuracy on specialized tasks |

| RAG | Knowledge-intensive apps | Requires retrieval infrastructure |

| Fine-tuning | Domain-specific outputs | Higher cost, longer setup time |

| RLHF | Alignment with user preferences | Needs labeled feedback data |

Pro Tip: Start with prompt engineering and RAG before committing to fine-tuning. You'll get 80% of the accuracy gains at 20% of the cost and time investment.

For teams exploring AI agent examples, agentic architectures add another layer of complexity. Agents that call external tools or make multi-step decisions require careful orchestration and failure handling. Keep your first version simple. The future of AI is agentic, but production stability comes first.

Evaluating, securing, and scaling your generative AI application

With your app built, the next step is making sure it's safe, effective, and ready to scale under real-world conditions.

Deployment is not the finish line. It's the starting gun for a new phase of work. Production AI apps require continuous evaluation, active security monitoring, and infrastructure that can grow with demand.

Here's what a robust post-launch operations framework looks like:

- Metrics-based evaluation: Use MLflow or similar tools to track output quality, latency, and error rates across model versions. Set thresholds that trigger alerts when quality drops.

- Human feedback loops: Build mechanisms for users to flag bad outputs. This data is gold for retraining and prompt refinement.

- Observability: Instrument your app with logging and tracing so you can diagnose failures quickly. Blind spots in production AI are dangerous.

- CI/CD pipelines: Automate testing and deployment so model updates don't require manual intervention or cause downtime.

- Security scanning at generation time: LLMOps best practices include integrating security scanning at the generation point to catch harmful, biased, or sensitive outputs before they reach users.

- Smart routing: As your app scales, route requests to different model sizes based on complexity and cost requirements. Simple queries don't need your most expensive model.

For teams building enterprise-scale AI solutions, resource monitoring becomes critical at scale. Plan for model retraining cycles, version control, and rollback capabilities before you need them. A system that works for 1,000 users will behave very differently at 100,000. Build that headroom into your architecture from the start.

What most leaders get wrong about deploying generative AI apps

Let's step back and consider why so many well-funded generative AI app initiatives quietly miss expectations, and what leaders can do differently.

Here's the uncomfortable truth: technology is almost never the bottleneck. The teams that struggle most with generative AI deployment are the ones that underinvest in change management, scope discipline, and business value measurement. They ship a model, celebrate the launch, and then discover that users don't trust the outputs, internal teams don't know how to work with AI-generated content, and no one is tracking whether the app actually moves the needle.

Evaluation and security are the two areas most commonly deprioritized after launch. Product teams assume the model will hold up. It won't, not without active monitoring and feedback integration. AI ethics in production is a continuous practice, not a one-time audit.

The teams that consistently win with generative AI treat it as a cross-disciplinary product, not an ML experiment. They keep scope tight, measure relentlessly, and build feedback loops that make the system smarter over time. Solo ML wizardry doesn't scale. Collaborative, process-driven development does.

Bring your generative AI vision to life with trusted experts

Ready to build your own generative AI-powered solution? Here's how Proud Lion Studios can help you achieve it.

At Proud Lion Studios, we've taken generative AI from concept to production for startups and enterprise clients across multiple industries. Our work on Mira AI is a strong example of what's possible when AI, product thinking, and solid engineering come together. We also built the Pride AI Estimator to help teams scope and cost AI projects with confidence before committing to development.

Whether you need a full AI product build, model integration support, or a technical partner to de-risk your roadmap, our UAE-based team brings the expertise to move fast without cutting corners. We also offer blockchain development services for teams building at the intersection of AI and Web3. Let's build something that actually works.

Frequently asked questions

What technologies are commonly used in generative AI app development?

Core technologies include large language models, CI/CD platforms, MLflow for evaluation, and techniques like RAG and prompt engineering to customize and improve output quality.

How do you evaluate the success of a generative AI app?

Use both automated metrics via MLflow and structured human feedback loops to assess output quality, reliability, and alignment with real user needs.

What security measures are critical for generative AI applications?

Integrate security scanning at the generation point and maintain continuous observability to detect misuse, harmful outputs, and performance degradation before they impact users.

How does prompt engineering impact app performance?

Effective prompt engineering accelerates prototyping and directly improves the accuracy and usability of LLM-based applications, often delivering strong results before any fine-tuning is needed.