Developers expected AI coding tools to supercharge their productivity by 24%, but reality delivered a shock: experienced coders actually slowed down by 19% when using early AI assistants. This gap between hype and performance reveals AI's complex role in modern software development. For tech leaders and developers exploring AI integration, understanding what AI can genuinely deliver versus what it promises is critical for smart adoption.

Table of Contents

- Understanding The Current Capabilities And Limitations Of AI In Software Development

- The Productivity Paradox: Why AI Tools Sometimes Slow Developers Down

- AI Adoption And Market Growth: Startup Trends And Tool Popularity In 2026

- Emerging AI Development Frameworks: Human Orchestration And Collaboration With AI Agents

- Explore AI-Powered Software Development Solutions With Proud Lion Studios

- Frequently Asked Questions About AI In Software Development

Key takeaways

| Point | Details |
|---|---|
| Current AI coding success rates | Advanced AI models achieve only 23.3% success on complex enterprise-level coding tasks. |
| Productivity paradox revealed | Developers experienced 19% slowdowns using early AI tools despite expecting 24% speed increases. |
| Rapid startup adoption | AI generated 25-40% of code at startups by mid-2025, showing widespread integration. |
| Explosive market growth | AI coding tools market reached $7.37 billion in 2025, projected to hit $25 billion by 2030. |
| Structured collaboration frameworks | New frameworks like Agentsway enable human orchestration of specialized AI agents for better collaboration. |

Understanding the current capabilities and limitations of AI in software development

AI coding models face a reality check when tackling complex, enterprise-level problems. The SWE-BENCH PRO benchmark tests AI on real-world software challenges that go beyond simple code completion or basic bug fixes. These tasks mirror the messy, interconnected problems developers encounter daily in production environments.

Current coding models achieve success rates of 23.3% on these advanced tests, with GPT-5 leading the pack. This figure tells us something crucial: AI excels at routine tasks but struggles with architectural decisions, complex debugging, and understanding business context. The gap between 23% and the reliability threshold enterprises need remains significant.

"AI coding assistance shows promise for specific tasks, but enterprise software development demands reliability far beyond current model capabilities."

For tech leaders planning AI integration, these numbers matter. You can't replace experienced developers with AI, but you can augment their capabilities strategically. Focus AI tools on code generation for well-defined features, automated testing, and documentation. Reserve complex problem-solving, system design, and critical business logic for human expertise.

This measured approach to the future of artificial intelligence in development acknowledges current limitations while preparing for rapid improvements. The 23% success rate today will climb, but setting realistic expectations prevents costly missteps during adoption.

The productivity paradox: Why AI tools sometimes slow developers down

The disconnect between expectation and reality in AI coding tools reveals uncomfortable truths about early adoption. Developers anticipated 24% productivity gains but measured a 19% slowdown instead. This 43-percentage-point gap wasn't just disappointing; it challenged fundamental assumptions about AI's immediate value.
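The 43-point figure is a difference in percentage points, not a relative percentage. A minimal sketch of the arithmetic, assuming the slowdown is written as a negative speedup (the function name is illustrative, not from any cited study):

```python
def percentage_point_gap(expected_pct: float, measured_pct: float) -> float:
    """Difference between an expected and a measured change, in percentage points."""
    return expected_pct - measured_pct


expected_speedup = 24.0   # developers forecast a 24% speedup
measured_speedup = -19.0  # a 19% slowdown, expressed as a negative speedup

gap = percentage_point_gap(expected_speedup, measured_speedup)
print(f"gap: {gap:.0f} percentage points")  # gap: 43 percentage points
```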

Several factors drive this productivity paradox:

- Context switching costs: Developers spend mental energy reviewing AI suggestions, evaluating correctness, and fixing subtle bugs introduced by plausible-but-wrong code

- Integration friction: Early tools lacked smooth IDE integration, forcing developers to jump between interfaces and break their flow state

- False confidence: AI-generated code that looks correct but contains logic errors creates debugging time that exceeds any initial time savings

- Adaptation overhead: Learning to prompt AI effectively, understanding its limitations, and building new workflows takes time that initially reduces output

Experienced developers felt the slowdown most acutely because their established workflows clashed with AI tool patterns. Junior developers sometimes saw gains because they lacked ingrained habits to disrupt. This pattern suggests AI tools need better design around expert workflows, not just code generation capability.

Pro Tip: Measure your team's actual productivity metrics before and after AI tool adoption. Track pull request cycle time, bug rates, and developer satisfaction. These hard numbers reveal whether AI delivers value or creates hidden costs in your specific context.
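One way to act on this tip is to compare median pull request cycle time before and after the rollout. The sketch below is a minimal illustration under stated assumptions: the sample durations are invented, and the helper names (`percent_change`, `compare_cycle_times`) are hypothetical rather than part of any tool's API.

```python
from statistics import median


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent (positive = slower)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


def compare_cycle_times(before_hours: list[float], after_hours: list[float]) -> dict[str, float]:
    """Compare median PR cycle time (open to merge, in hours) across two periods."""
    base = median(before_hours)
    new = median(after_hours)
    return {
        "median_before_h": base,
        "median_after_h": new,
        "change_pct": percent_change(base, new),
    }


# Hypothetical sample data: hours from PR opened to PR merged.
baseline = [20.0, 26.0, 18.0, 30.0, 24.0]   # before AI tool adoption
with_ai = [22.0, 31.0, 27.0, 29.0, 35.0]    # after AI tool adoption

report = compare_cycle_times(baseline, with_ai)
print(report)
```

The median is used instead of the mean so that a single long-lived pull request does not dominate the comparison. The same pattern applies to bug rates or any other metric you track.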

The productivity question ties directly to the ethical implications of AI in workplace deployment. Teams deserve transparency about AI's real impact, not vendor promises. Regional efforts such as Artificial Intelligence Development in Dubai show that this challenge spans global markets.

AI adoption and market growth: Startup trends and tool popularity in 2026

Startups embraced AI coding tools rapidly despite the productivity paradox, driven by competitive pressure and the promise of shipping faster. By mid-2025, 25-40% of startup code came from AI generation or heavy AI assistance. This adoption rate dwarfs traditional enterprise software rollouts, reflecting both AI's accessibility and startup willingness to experiment.

The AI coding tools market tells a growth story that venture capitalists love. Market valuation hit $7.37 billion in 2025, with projections reaching $25 billion by 2030. That growth of roughly 240% over five years reflects both expanding adoption and rising tool sophistication.
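The growth figure can be checked from the two valuations quoted above. This short sketch computes total growth and, as an added assumption not stated in the source, the compound annual growth rate that a steady five-year climb would imply:

```python
def total_growth_pct(start: float, end: float) -> float:
    """Total growth from `start` to `end`, in percent."""
    return (end / start - 1.0) * 100.0


def cagr_pct(start: float, end: float, years: int) -> float:
    """Compound annual growth rate in percent, assuming steady yearly growth."""
    return ((end / start) ** (1.0 / years) - 1.0) * 100.0


START_2025 = 7.37  # market size in USD billions (figure from the article)
END_2030 = 25.0    # projected market size in USD billions (figure from the article)
YEARS = 5

print(f"total growth: {total_growth_pct(START_2025, END_2030):.1f}%")
print(f"implied CAGR: {cagr_pct(START_2025, END_2030, YEARS):.1f}%")
```

The total comes out at about 239%, and the implied annual rate is a little under 28%. Actual year-to-year growth may well be uneven.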

| Tool Category | Market Share | Primary Use Case |
|---|---|---|
| GitHub Copilot | 42% | Code completion and generation |
| ChatGPT/Claude | 28% | Problem-solving and architecture |
| Specialized tools | 18% | Domain-specific code generation |
| Enterprise platforms | 12% | Integrated development workflows |

GitHub Copilot commands 42% market share, establishing itself as the default AI coding assistant. Its Microsoft backing and GitHub integration created network effects that competitors struggle to overcome. However, the 28% share held by conversational AI tools like ChatGPT and Claude reveals developers value flexible problem-solving over pure code generation.

Key factors driving adoption include:

- Pressure to ship features faster in competitive markets

- Developer curiosity and desire to experiment with cutting-edge tools

- Management belief that AI adoption signals innovation and modernization

- Genuine value in specific use cases like boilerplate generation and test writing

For tech leaders evaluating AI tools, market share indicates stability and community support, but doesn't guarantee fit for your team's needs. Consider your development workflow, language ecosystem, and team experience level. Successful adoption requires matching tool capabilities to actual pain points, not following trends.

This rapid growth parallels startup growth strategies leveraging technology for competitive advantage. AI coding tools represent infrastructure investment similar to cloud services or CI/CD pipelines.

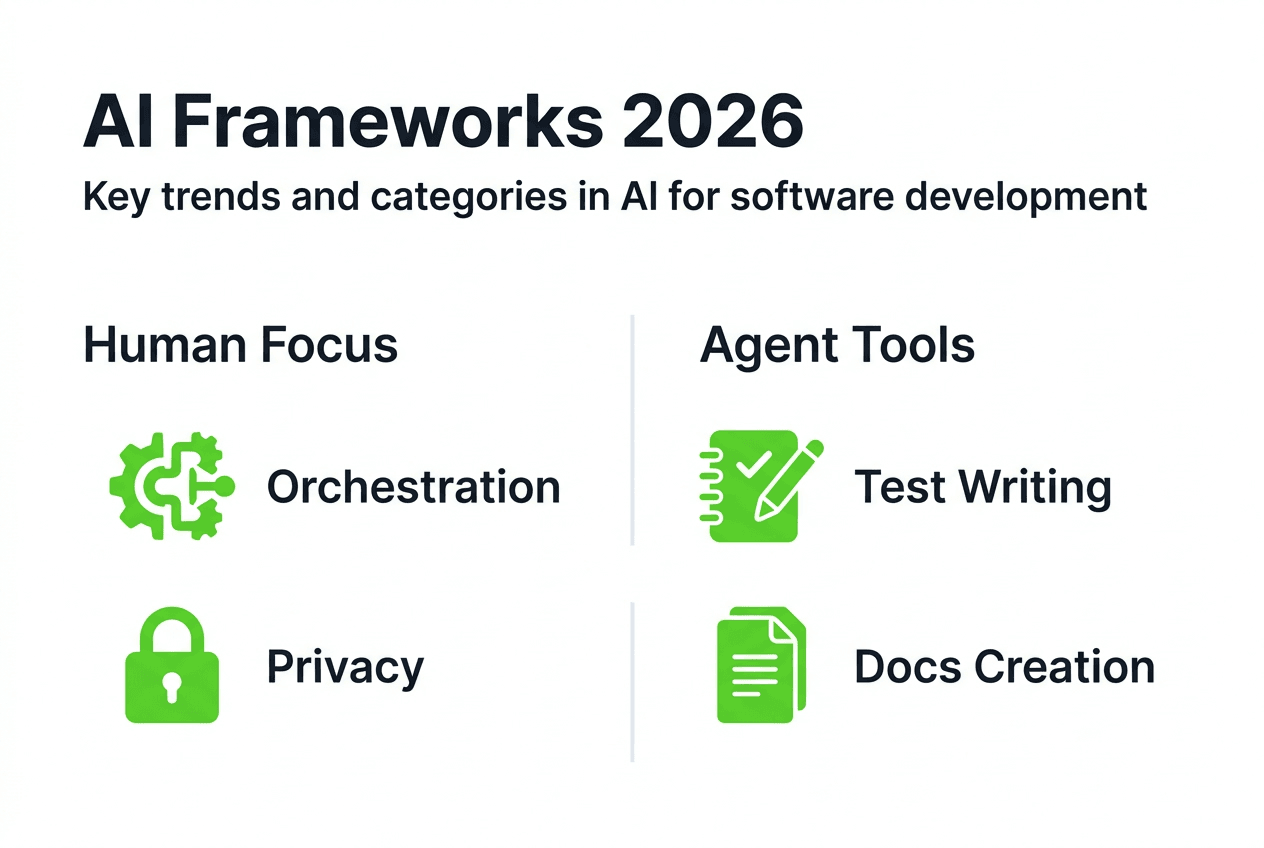

Emerging AI development frameworks: Human orchestration and collaboration with AI agents

The productivity challenges and mixed results from early AI tools sparked innovation in how we structure human-AI collaboration. Agentsway introduces a framework specifically designed for software development that keeps humans in control while leveraging specialized AI agents for distinct tasks. This approach acknowledges AI's limitations while maximizing its strengths.

The framework centers on human orchestration, meaning developers define tasks, delegate to appropriate AI agents, and validate outputs. Unlike autonomous AI coding tools that generate code independently, Agentsway creates a collaborative environment where:

- Human developers break down complex problems into smaller, well-defined tasks suitable for AI assistance

- Specialized AI agents handle specific domains like frontend code, backend logic, database queries, or test generation

- Privacy-preserving collaboration protocols ensure sensitive code and business logic remain protected

- Quality gates require human approval before AI-generated code enters production systems

- Continuous learning loops improve agent performance based on human feedback and corrections

This structured approach targets critical problems that plagued earlier AI tools. By maintaining human control over architecture and business logic while automating routine coding tasks, teams can avoid much of the productivity slowdown caused by context switching and error correction. Specialized agents can also develop deeper expertise in narrow domains than generalist models.
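The pattern described above (humans split the work, specialized agents propose output, a human gate approves it) can be sketched in a few lines. This is an illustration of the general idea only, not Agentsway's actual API: every class and function name here is hypothetical, the agents are stubs rather than real models, and the `human_review` callback stands in for an interactive review step.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    domain: str        # e.g. "tests" or "docs"
    description: str


@dataclass
class Result:
    task: Task
    output: str
    approved: bool = False


# In this sketch an agent is just a function from a task to proposed text.
Agent = Callable[[Task], str]
Reviewer = Callable[[Task, str], bool]


@dataclass
class Orchestrator:
    agents: dict[str, Agent] = field(default_factory=dict)

    def register(self, domain: str, agent: Agent) -> None:
        self.agents[domain] = agent

    def run(self, tasks: list[Task], review: Reviewer) -> list[Result]:
        """Route each task to its domain agent, then apply the human quality gate."""
        results = []
        for task in tasks:
            agent = self.agents.get(task.domain)
            if agent is None:
                raise KeyError(f"no agent registered for domain {task.domain!r}")
            output = agent(task)
            results.append(Result(task, output, approved=review(task, output)))
        return results


def stub_test_agent(task: Task) -> str:
    return f"# generated test stub for: {task.description}"


def stub_docs_agent(task: Task) -> str:
    return ""  # simulates an agent that returns nothing useful


def human_review(task: Task, output: str) -> bool:
    # Placeholder for a real person's decision; here, reject empty output.
    return bool(output.strip())


orchestrator = Orchestrator()
orchestrator.register("tests", stub_test_agent)
orchestrator.register("docs", stub_docs_agent)

results = orchestrator.run(
    [Task("tests", "login form validation"), Task("docs", "README setup section")],
    review=human_review,
)
mergeable = [r for r in results if r.approved]  # only approved output moves forward
print([r.task.domain for r in mergeable])
```

Keeping the reviewer as a separate callback makes the quality gate explicit: nothing an agent produces is marked approved unless the review step says so.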

Pro Tip: When implementing AI frameworks, start with low-risk tasks like test generation or documentation. Build confidence in AI output quality and team collaboration patterns before expanding to core business logic.

The privacy-preserving aspect matters enormously for enterprises handling sensitive data or proprietary algorithms. Traditional cloud-based AI tools raise concerns about intellectual property exposure. Frameworks that enable on-premises deployment or secure multi-party computation address these risks.

These innovations in AI agents services mark the maturation of AI coding assistance from experimental tools into production-ready systems. Companies like Proud Lion Studios build AI chat-based solutions and develop AI agents that show practical implementations.

Frameworks like Agentsway signal a shift from replacing developers to empowering them. This philosophy aligns with AI's realistic capabilities today while preparing for future advances.

Explore AI-powered software development solutions with Proud Lion Studios

Navigating AI integration requires expertise that balances technical capability with business outcomes. You've seen the data: AI tools promise transformation but deliver mixed results without proper implementation strategy.

Proud Lion Studios specializes in AI agents services that bring structured frameworks to your development workflow. Our Dubai-based team implements AI solutions that enhance developer productivity without the slowdowns plaguing early adopters. We focus on mobile app development services that incorporate AI naturally, creating user experiences that feel intelligent without feeling artificial. Our process automation services use AI to eliminate repetitive tasks while keeping humans in control of critical decisions. Whether you're exploring your first AI integration or scaling existing implementations, we deliver tailored solutions backed by real-world results.

Frequently asked questions about AI in software development

What percentage of coding tasks can current AI models handle reliably?

Current AI models successfully complete about 23% of complex, enterprise-level coding challenges based on SWE-BENCH PRO benchmarks. They perform better on routine tasks like code completion, boilerplate generation, and simple bug fixes. For critical business logic and architectural decisions, human expertise remains essential.

Why do AI coding tools sometimes reduce developer productivity?

AI tools can slow developers through context switching costs, reviewing incorrect suggestions, and debugging subtle AI-introduced errors. Early tools lacked smooth workflow integration, forcing developers to jump between interfaces. The adaptation period for learning effective AI collaboration also temporarily reduces output.

How much code in modern startups comes from AI assistance?

By mid-2025, AI generated or heavily assisted with 25-40% of new code at startups. This rapid adoption reflects competitive pressure and experimentation with emerging tools. However, AI-generated code still requires human review, architecture decisions, and integration work.

What frameworks help developers collaborate effectively with AI agents?

Agentsway and similar frameworks introduce structured lifecycles centered on human orchestration. They use specialized AI agents for specific domains while maintaining human control over architecture and quality. Privacy-preserving collaboration protocols protect sensitive code, and continuous learning loops improve agent performance.

How should enterprises evaluate AI coding tools for adoption?

Start by measuring current productivity metrics like pull request cycle time and bug rates. Test AI tools on low-risk tasks first, such as test generation or documentation. Evaluate tools based on your specific language ecosystem, development workflow, and team experience. Focus on solving actual pain points rather than following market trends.

What's driving the explosive growth in the AI coding tools market?

The market is projected to grow from $7.37 billion in 2025 to $25 billion by 2030, driven by developer demand, competitive pressure in tech companies, and genuine value in specific use cases. GitHub Copilot's 42% market share and strong venture capital investment fuel continued innovation and adoption.
