TL;DR:

- Structured thinking and early validation are crucial for scaling successful tech products.

- Choosing the right methodology and building strong foundations enhances innovation and reduces risks.

- Monitoring key metrics and addressing technology-specific edge cases ensure long-term product stability.

Most people assume that the most disruptive tech ventures were built in a blur of late nights and gut decisions. The reality is almost the opposite. The products that scale, the platforms that attract enterprise clients, and the AI or blockchain solutions that survive past their first year all share a common thread: structured thinking applied at every stage. Early validation increases launch success by 62%, and that number alone should reframe how you approach your next build. This article walks you through proven frameworks, methodology choices, performance benchmarks, and the edge cases that catch even experienced teams off guard.

Table of Contents

- Essential stages of tech product development

- Choosing the right development methodology: Agile, Lean, Waterfall, or Hybrid?

- Key metrics and performance benchmarks: DORA metrics and beyond

- Addressing edge cases: Specialized pitfalls in AI, blockchain, and mobile

- Perspective: Why foundations (platform and process) drive real tech innovation

- Launch your next tech innovation with expert support

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Follow proven stages | Successful tech product development relies on structured stages adapted to project type. |
| Pick the right methodology | Choosing Agile, Lean, Waterfall, or Hybrid impacts both speed and control for AI, blockchain, or mobile projects. |
| Track key performance metrics | DORA metrics and other benchmarks guide optimization and sustained innovation in tech teams. |
| Plan for specialized challenges | Unique risks in AI, blockchain, and mobile demand focused audits and continuous validation. |
| Foundations enable innovation | Investing early in platform engineering and process maturity is essential for scale and resilience. |

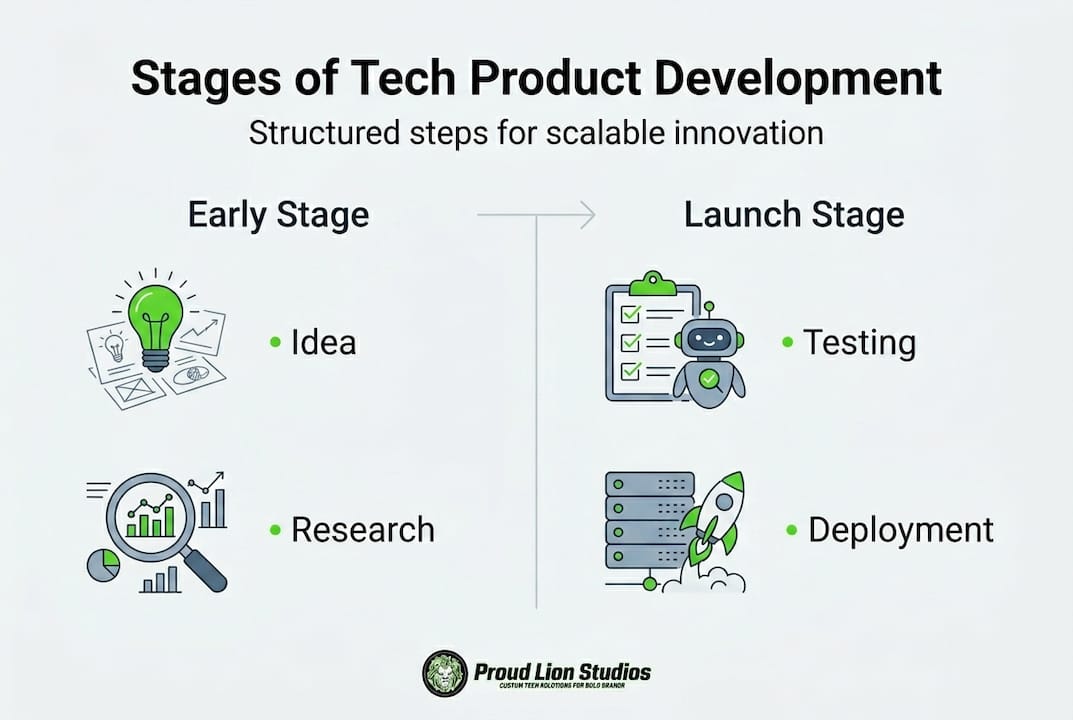

Essential stages of tech product development

Every successful tech product, whether it runs on AI, a blockchain network, or a mobile platform, moves through a recognizable sequence of stages. Skipping or rushing any one of them is where budgets evaporate and launches disappoint.

Tech product development follows structured stages: idea generation and validation, market research, concept and feasibility analysis, design and prototyping, development and engineering, testing and iteration, and finally launch with ongoing optimization. The specifics shift depending on your technology stack. AI products demand early data strategy and model evaluation before a single line of production code is written. Blockchain projects require architecture decisions around consensus mechanisms, smart contract design, and mandatory security audits. Mobile builds need intensive UI/UX focus from day one and rigorous testing across device types and operating system versions.

One statistic that should change how you budget: most disruptive products spend roughly 40% of their total budget post-launch on maintenance, iteration, and optimization. Planning for this from the start is not pessimism; it is accuracy.
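The arithmetic behind that figure is worth making explicit. If post-launch work takes about 40% of the total, the pre-launch build is only the remaining 60%, so the total is the build cost divided by 0.6. A minimal sketch, where the function names and the 300,000 build estimate are hypothetical and chosen only for illustration:

```python
def total_budget(build_cost: float, post_launch_share: float = 0.40) -> float:
    """Total budget implied by a pre-launch build cost.

    If post-launch work takes `post_launch_share` of the total, the build
    cost is the remaining (1 - share), so total = build_cost / (1 - share).
    """
    if not 0 <= post_launch_share < 1:
        raise ValueError("post_launch_share must be in [0, 1)")
    return build_cost / (1 - post_launch_share)


def post_launch_reserve(build_cost: float, post_launch_share: float = 0.40) -> float:
    """Amount to set aside for maintenance, iteration, and optimization."""
    return total_budget(build_cost, post_launch_share) - build_cost


if __name__ == "__main__":
    build = 300_000  # hypothetical pre-launch estimate, not a figure from the article
    print(f"total:   {total_budget(build):,.0f}")
    print(f"reserve: {post_launch_reserve(build):,.0f}")
```

Under these assumptions, a 300,000 build implies a total of roughly 500,000, with about 200,000 held back for post-launch work. The point is that the reserve is two thirds of the build cost, not 40% of it.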

Here is how the core stages map across the three technology categories:

| Stage | AI focus | Blockchain focus | Mobile focus |
|---|---|---|---|
| Ideation and validation | Data availability, use case fit | Consensus model, token economics | Target device, OS baseline |
| Prototyping | Model selection, initial training | Contract logic, architecture draft | Wireframes, interaction design |
| Development | Pipeline build, MLOps setup | Smart contract coding, node config | Native or cross-platform build |
| Testing | Model accuracy, bias checks | Security audit, load testing | Device fragmentation, UX testing |
| Post-launch | Drift monitoring, retraining | Contract upgrades, governance | OS updates, OTA delivery |

For startups looking to reduce risk early, validating an MVP is often the most cost-effective first move before committing to full-scale development.

Key actions to prioritize across every stage:

- Define your success criteria before writing requirements

- Validate assumptions with real users before prototyping

- Plan your data infrastructure as early as your product architecture

- Schedule security reviews as a phase gate, not an afterthought

- Build post-launch iteration cycles into your original roadmap

Choosing the right development methodology: Agile, Lean, Waterfall, or Hybrid?

Understanding the stages is only half the picture: your methodology, the way you move through them, can accelerate or constrain your results. The wrong process choice is one of the quieter killers of otherwise sound product ideas.

Here is a practical breakdown of the four primary approaches:

- Agile works through short iterative sprints with continuous feedback loops. It is the natural fit for AI and mobile projects where requirements evolve as you learn. Its weakness is that it can struggle with fixed regulatory timelines.

- Lean focuses on eliminating waste and reaching a minimum viable product (MVP) as fast as possible. It is ideal for early-stage startups stress-testing product-market fit without burning runway.

- Waterfall follows a strictly sequential flow where each phase completes before the next begins. It suits blockchain projects with stable compliance requirements and predictable deliverables, even if it moves more slowly.

- Hybrid combines Waterfall-style planning with Agile execution. It is the dominant choice for enterprise-scale projects that carry regulatory demands alongside the need for speed.

Agile MVPs launch 40% faster than Waterfall-built equivalents, which matters enormously when you are competing for early adopters or investor attention. However, fast is not always better. A smart contract with a security flaw that went through Agile without a structured audit phase is a liability, not a feature.

| Methodology | Best for | Key advantage | Key risk |
|---|---|---|---|
| Agile | AI, mobile | Speed, adaptability | Scope creep |
| Lean | Early startups | Minimal waste | Under-built product |
| Waterfall | Blockchain compliance | Predictability | Slow to change |
| Hybrid | Enterprise projects | Balance of speed and control | Process complexity |

Enterprise Agile vs. Waterfall decisions often come down to regulatory exposure, team size, and how well-defined the initial requirements really are.

Pro Tip: If your team spans multiple disciplines and compliance functions, a Hybrid approach lets you lock in governance requirements up front while keeping sprint-based flexibility for features and UI iterations.

Key metrics and performance benchmarks: DORA metrics and beyond

With a methodology in hand, you need a reliable way to measure progress.

DORA metrics (developed by Google's DevOps Research and Assessment team) are the closest thing the industry has to a universal benchmark for software delivery performance. There are five core metrics to track:

- Deployment Frequency: How often you push to production

- Lead Time for Changes: Time from code commit to production

- Change Failure Rate: Percentage of deployments causing failures

- Mean Time to Recover (MTTR): How fast you restore service after failure

- Rework Rate: Proportion of work that has to be redone

Elite teams deploy multiple times per day, with lead time under one hour, a change failure rate of 0 to 5%, MTTR under one hour, and a rework rate under 10%. Average teams lag significantly behind on all five.

| Metric | Elite benchmark | Average benchmark |
|---|---|---|
| Deployment Frequency | Multiple per day | Once per week or less |
| Lead Time for Changes | Less than 1 hour | 1 to 7 days |
| Change Failure Rate | 0 to 5% | 16 to 30% |
| MTTR | Less than 1 hour | 1 day to 1 week |
| Rework Rate | Under 10% | 25%+ |
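To compare your own team against these benchmarks, you first need the numbers. The sketch below shows one way to derive four of the metrics from a log of deployments. The `Deployment` record and its field names are hypothetical, not part of any DORA tooling, and rework rate is left out because it depends on how your tracker labels redone work.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarize four delivery metrics over a reporting window."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    lead_times_h = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recoveries_h = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times_h),
        "change_failure_rate": len(failures) / len(deployments),
        # Reported as 0.0 when there were no recovered failures in the window.
        "mean_time_to_recover_hours": (
            sum(recoveries_h) / len(recoveries_h) if recoveries_h else 0.0
        ),
    }


if __name__ == "__main__":
    start = datetime(2025, 1, 6, 9, 0)  # illustrative data only
    log = [
        Deployment(start, start + timedelta(hours=2)),
        Deployment(start, start + timedelta(hours=5), failed=True,
                   restored_at=start + timedelta(hours=6)),
    ]
    print(dora_summary(log, window_days=1))
```

Median is used for lead time so that one unusually slow change does not dominate the result. That is a design choice in this sketch, not a requirement of the metric.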

On AI development performance, the 2025 DORA findings are clear: AI adoption reaches 90% among surveyed technology professionals, and more than 80% of them report productivity gains, but AI amplifies both strengths and weaknesses without strong platform foundations. Teams with solid testing and CI/CD pipelines get faster and more reliable. Teams without them get faster at producing unstable code. Earlier DORA research found elite performers deploying 973x more frequently than low performers. For mobile teams specifically, the principles transfer directly to release cadence and crash rate management.

Use these metrics to locate your actual bottlenecks:

- High lead time signals slow review or approval processes

- High failure rate points to insufficient automated testing

- Slow MTTR reveals gaps in monitoring or incident response

- High rework rate usually means unclear requirements at the start
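The mapping above from symptom to likely cause can be automated as a simple check. The following is a minimal sketch: the threshold values are taken from the lower edge of the "Average benchmark" column in the table above and are illustrative cut-offs, not official DORA definitions, and the metric key names are hypothetical.

```python
# Illustrative cut-offs (assumption): the point at which a metric falls into
# the "average" band of the benchmark table, paired with the likely cause.
THRESHOLDS = {
    "lead_time_hours": (24.0, "slow review or approval processes"),
    "change_failure_rate": (0.16, "insufficient automated testing"),
    "mttr_hours": (24.0, "gaps in monitoring or incident response"),
    "rework_rate": (0.25, "unclear requirements at the start"),
}


def diagnose(metrics: dict[str, float]) -> list[str]:
    """Return one finding per metric that meets or exceeds its cut-off."""
    findings = []
    for name, (limit, cause) in THRESHOLDS.items():
        value = metrics.get(name)
        if value is not None and value >= limit:
            findings.append(f"{name}={value:g} (>= {limit:g}): likely {cause}")
    return findings


if __name__ == "__main__":
    team = {  # hypothetical team snapshot
        "lead_time_hours": 48,
        "change_failure_rate": 0.05,
        "mttr_hours": 0.5,
        "rework_rate": 0.30,
    }
    for finding in diagnose(team):
        print(finding)
```

A check like this only points at where to look first. The causes listed are the usual suspects, and a team should confirm them against its own value stream before changing process.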

Addressing edge cases: Specialized pitfalls in AI, blockchain, and mobile

Even with the right structure and measurement, success with cutting-edge technologies demands mastery of their quirks.

Each technology category carries its own class of high-impact failure modes. Knowing them before you build is far cheaper than discovering them in production.

AI-specific risks: Data drift occurs when your model's training data no longer reflects real-world inputs, quietly degrading performance over time. AI hallucination rates under 5% are the target for production systems, but achieving that requires continuous monitoring and validation loops. Latency is another constraint, with most user-facing AI features requiring response times under two seconds to maintain engagement.
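Drift monitoring can start very simply. One common technique is the Population Stability Index (PSI), which compares how a feature is distributed in the training baseline against how it is distributed in live traffic. The sketch below is a plain-Python illustration under stated assumptions: equal-width bins derived from the baseline, a small floor to avoid taking the log of zero, and a function name chosen for this example.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a live sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]  # synthetic training-time feature values
    live = [x + 0.5 for x in baseline]        # synthetic shifted production values
    print(f"PSI vs. itself:  {psi(baseline, baseline):.3f}")
    print(f"PSI vs. shifted: {psi(baseline, live):.3f}")
```

An often-cited rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, but that cut-off is a convention, not a guarantee. Tune it against your own data and pair it with direct checks on model accuracy.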

Blockchain-specific risks: Smart contract vulnerabilities account for 70% of blockchain project failures. These are not just theoretical risks. Bugs in contract logic have led to nine-figure losses across DeFi platforms. Third-party audits are not optional here; they are the minimum acceptable standard. Hybrid architectures that keep sensitive logic on-chain and handle storage or computation off-chain can reduce both cost and exposure when implemented correctly. For teams working through blockchain edge cases, the audit process should be treated as a phase gate, not a final checkbox.

Mobile-specific risks: Continuous OS updates from both Android and iOS create ongoing compatibility debt. Device fragmentation means a feature that works perfectly on a flagship phone may break on a mid-range model that represents a large share of your actual user base. Over-the-air (OTA) update mechanisms can let you ship some changes without waiting for an app store review cycle, which is powerful but requires strict governance to avoid shipping broken builds. Blockchain features in a mobile app add another layer of complexity around wallet management, key storage, and on-device transaction signing.

Pro Tip: For blockchain projects, budget at least one dedicated sprint for third-party security audits before any public launch. For mobile, maintain a device testing lab that covers at least the top 10 devices by market share in your target region.

"Success hinges on platform engineering, value stream mapping, testing, and CI/CD before attempting to scale AI or blockchain solutions. Hybrid methodologies that balance efficiency and scalability outperform single-method approaches at enterprise level."

Teams building scalable AI and blockchain apps consistently report that the edge cases, not the core features, determine whether a product survives its first year.

Perspective: Why foundations (platform and process) drive real tech innovation

Tackling the quirks of advanced tech exposes a deeper truth about what really creates lasting advantage.

The "move fast and break things" mantra has a shelf life. It might get you to a demo, but it does not get you to scale in AI, blockchain, or mobile. What we have seen working with products across these technology categories is that the teams who move fastest sustainably are the ones who invested earliest in foundations: clean CI/CD pipelines, platform reliability, rigorous testing, and process discipline.

Foundations drive scale: platform engineering, value stream mapping, and CI/CD maturity must precede aggressive AI or blockchain scaling. Hybrid methodologies consistently outperform single-method approaches at enterprise level.

The irony is that building on solid foundations feels slower at first. Teams resist it because it looks like overhead. But an AI model deployed without proper drift monitoring is not a product; it is a countdown clock. A smart contract shipped without an audit is not a launch; it is a liability. Invest early in end-to-end product building infrastructure and your iteration speed will compound over time, while teams that skipped the groundwork spend their sprints putting out fires instead of shipping features.

Launch your next tech innovation with expert support

If you want to turn these best practices into business results, here's where to start.

Building with AI, blockchain, or mobile at scale requires more than a talented team. It requires partners who have navigated the exact failure modes and methodology decisions covered here. Proud Lion Studios works with startups and enterprises from discovery through deployment, applying the structured approaches that actually produce scalable, secure digital products.

Whether you need end-to-end blockchain development solutions for a Web3 platform, production-grade smart contract development with rigorous audit processes built in, or want to scope your next project using our AI-powered project estimator, the team is ready to help you build something that lasts.

Frequently asked questions

How long does tech product development take for AI, blockchain, or mobile solutions?

Agile MVPs launch 40% faster than Waterfall-built alternatives, with average development cycles ranging from 2 to 9 months depending on complexity, compliance requirements, and team structure.

Which methodology is best for a startup building an AI-powered app?

Agile or Lean are the strongest fits because they support rapid prototyping and continuous feedback. Agile's iterative sprints make it especially effective for AI projects where requirements shift as models are trained and tested.

What are DORA metrics and why do they matter in tech product development?

DORA metrics are industry benchmarks for software delivery performance covering deployment frequency, lead time, failure rate, recovery speed, and rework rate. Elite benchmarks help teams identify gaps and accelerate delivery without sacrificing stability.

How do you mitigate smart contract risks in blockchain development?

Third-party security audits are the non-negotiable baseline. Smart contract vulnerabilities cause 70% of blockchain project failures, and no amount of internal review fully substitutes for an independent audit before public launch.

What causes project failures in cutting-edge tech product development?

The most common causes are skipped early validation, weak platform and process foundations, and underestimating technology-specific edge cases. Platform engineering and CI/CD maturity are the strongest predictors of long-term product success.