TL;DR:

- Custom AI agents should focus on automating high-frequency, rule-heavy, and error-prone tasks with clear goals.

- An 'agentish' design with bounded autonomy and human checkpoints enhances reliability and governance.

- Ongoing monitoring, phased scaling, and strict guardrails are vital for successful long-term deployment.

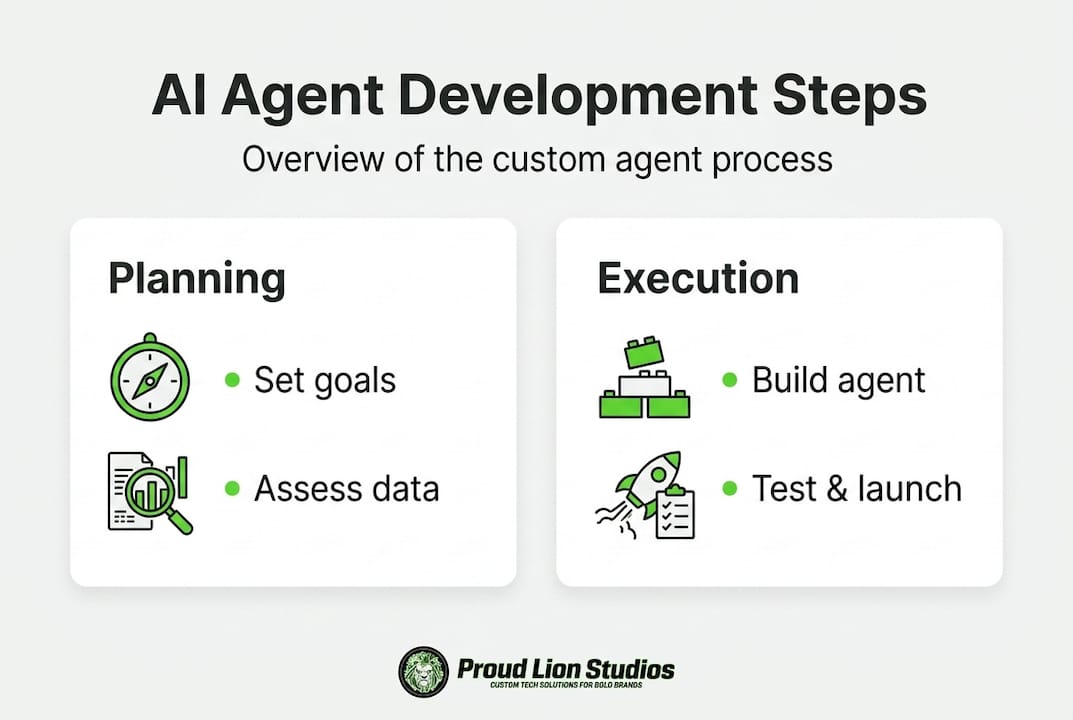

Custom AI agent development: A step-by-step guide

Manual, repetitive processes quietly drain revenue, slow teams down, and introduce errors at scale. If your operations still rely on human effort for tasks like data routing, report generation, or customer triage, you are leaving measurable efficiency gains on the table. Custom AI agents are not generic chatbots. They are purpose-built software entities that perceive inputs, reason over context, and take actions autonomously within your specific business environment. This guide walks you through every stage of developing one, from identifying where automation delivers real value to deploying, monitoring, and scaling agents that perform reliably in production.

Table of Contents

- Assessing business needs and prerequisites

- Designing agent architectures and selecting tools

- Implementing your custom AI agent

- Monitoring, scaling, and iterative improvement

- Editorial perspective: Why governance and 'agentish' design win long-term

- Explore custom AI agent solutions with Proud Lion Studios

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Prioritize governance | Effective custom AI agent development depends on strong governance and controlled autonomy. |
| Mitigate risk proactively | Guardrails, human oversight, and adversarial testing help prevent costly failures and infinite loops. |
| Continuous monitoring is crucial | Ongoing performance tracking and drift detection maintain agent reliability post-launch. |
| Start with bounded autonomy | Begin with controlled, agentish systems before moving to fully autonomous agentic designs. |

Assessing business needs and prerequisites

Before writing a single line of code, you need a clear map of where custom AI agents will actually move the needle for your organization. Starting with technology instead of business problems is one of the most expensive mistakes teams make. The right question is not "What can AI do?" but "Where are our biggest operational drags, and can an agent reliably own those tasks?"

Start by auditing your workflows. Look for processes that are:

- High-frequency and rule-heavy: Invoice processing, compliance checks, ticket classification, and data validation are ideal targets.

- Time-sensitive but predictable: Processes where delays cost money but the logic does not change much day to day.

- Error-prone under human fatigue: Tasks where humans make more mistakes late in the day or under volume pressure.

- Well-documented: Agents learn from data and instructions. If your process is undocumented or highly subjective, it is harder to automate reliably.

Once you have identified candidates, define clear, measurable goals. "Improve efficiency" is not a goal. "Reduce invoice processing time from 4 hours to 20 minutes with a 99% accuracy rate" is a goal. This specificity matters because it gives your team a yardstick and forces honest conversations about what the agent must actually do.
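One way to keep a goal like this honest is to write it down as data your team can check against observed numbers. The sketch below is a minimal illustration using the invoice example above; the `AgentGoal` class and its field names are hypothetical, not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGoal:
    """A measurable target for one automated process (hypothetical structure)."""
    name: str
    max_minutes_per_item: float  # processing-time ceiling
    min_accuracy: float          # required accuracy as a fraction in [0, 1]

    def is_met(self, observed_minutes: float, observed_accuracy: float) -> bool:
        # Both conditions must hold: a fast but inaccurate agent fails the goal.
        return (observed_minutes <= self.max_minutes_per_item
                and observed_accuracy >= self.min_accuracy)


# The invoice example from the text: 20 minutes per invoice at 99% accuracy.
invoice_goal = AgentGoal("invoice_processing",
                         max_minutes_per_item=20,
                         min_accuracy=0.99)
```

Calling `invoice_goal.is_met(18, 0.995)` returns `True`, while `invoice_goal.is_met(18, 0.97)` returns `False` because accuracy falls short even though the time target is met.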

Next, assess your data and integration landscape. Custom agents require access to clean, structured data sources, APIs, or internal systems. If your CRM, ERP, and communication platforms do not expose reliable APIs, plan for that integration work upfront. Governance is equally critical from day one. Define who owns agent outputs, what happens when an agent is uncertain, and how human escalation works.

The role of AI in business efficiency extends across departments, but only when the automation is grounded in clear operational goals. The biggest trap is evaluating agents purely on benchmark performance. Research shows that production success requires evaluating full system behavior rather than isolated benchmarks, with continuous monitoring and drift detection built in from the start. Teams that ignore orchestration risks and governance put their projects in real danger: Gartner forecasts that more than 40% of agent projects will be canceled by 2027.

| Evaluation factor | What to assess |
|---|---|
| Process repeatability | Can the task be described in clear, consistent rules? |
| Data availability | Is structured, accessible data available at scale? |
| Integration readiness | Do target systems offer stable APIs or connectors? |
| Governance framework | Who owns outputs and escalation decisions? |
| Success metrics | What measurable KPIs define a successful agent? |

Pro Tip: Run a one-week "shadow audit" where a team member logs every manual step they perform on a target process. That log becomes your agent specification.

Designing agent architectures and selecting tools

With your business requirements locked in, architecture design is where ambition meets reality. Choosing the wrong architecture is the number one reason AI agent projects stall after an exciting prototype phase. The core decision comes down to how much autonomy you actually need versus how much operational risk you can tolerate.

There are three broad architectural categories to consider:

- Rule-based agents: Operate on explicit conditional logic. Fast, predictable, and easy to audit. Best suited for highly structured tasks where edge cases are rare and well-understood.

- Agentish (bounded autonomy) agents: Use large language models or ML models to handle variability, but operate within constrained action spaces and require human checkpoints for high-stakes decisions. This is the sweet spot for most enterprise use cases in 2026.

- Fully agentic systems: Operate with broad autonomy, self-directed planning, and dynamic tool use. Powerful on paper, but high-risk in production. These systems require exceptional orchestration, extensive testing, and mature governance before they should touch any business-critical process.

Forrester's research on the complexity gap between Gen AI and AI agents delivers a clear warning: fully agentic approaches introduce compounding errors and are far harder to govern than their bounded counterparts. Gartner forecasts 40%+ project cancellations by 2027, largely driven by underestimated costs and risks in over-engineered agent designs. The message is direct: prefer bounded autonomy and establish governance before you expand capability.

For most teams, starting with an "agentish" design means the agent handles reasoning and decision-making within a defined, auditable action set. It can retrieve data, draft responses, or trigger workflows, but a human or a secondary validation layer reviews anything consequential. You expand that action space incrementally as confidence builds.
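To make the idea of a defined, auditable action set concrete, here is a minimal sketch of a bounded agent wrapper. It assumes three tiers: actions the agent may run on its own, actions that wait for human approval, and everything else, which is refused. The `BoundedAgent` class and the action names are hypothetical and stand in for whatever your framework provides.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Action = Callable[[dict], str]


@dataclass
class BoundedAgent:
    auto_actions: Dict[str, Action]    # safe to execute without review
    gated_actions: Dict[str, Action]   # consequential: need human sign-off
    review_queue: List[dict] = field(default_factory=list)

    def request(self, action: str, payload: dict) -> str:
        if action in self.auto_actions:
            return self.auto_actions[action](payload)
        if action in self.gated_actions:
            # Park the request for a human instead of executing it.
            self.review_queue.append({"action": action, "payload": payload})
            return "pending_review"
        # Anything outside the declared action set is refused outright.
        raise PermissionError(f"action not permitted: {action}")

    def approve(self, index: int) -> str:
        # A human reviewer releases one queued action for execution.
        item = self.review_queue.pop(index)
        return self.gated_actions[item["action"]](item["payload"])


agent = BoundedAgent(
    auto_actions={"draft_reply": lambda p: f"Draft for ticket {p['ticket_id']}"},
    gated_actions={"issue_refund": lambda p: f"Refunded {p['amount']}"},
)
```

Expanding autonomy then becomes an explicit, reviewable change: moving an action from `gated_actions` to `auto_actions` once its track record justifies it.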

On the toolkit side, your options in 2026 are mature and varied:

| Toolkit | Best fit | Key strength |
|---|---|---|
| LangChain / LangGraph | Custom reasoning chains | Flexibility and ecosystem support |
| AutoGen | Multi-agent collaboration | Agent-to-agent communication |
| CrewAI | Role-based agent teams | Simple multi-agent orchestration |
| OpenAI Assistants API | Rapid deployment | Native tool use and file access |
| Custom builds | Proprietary systems | Maximum control and IP ownership |

Review AI agent examples across industries before finalizing your stack, since real-world deployment patterns reveal constraints that documentation rarely covers. For specialized implementations, working with dedicated AI agent solutions partners accelerates the architecture phase significantly.

Pro Tip: Before committing to any toolkit, build a minimal proof-of-concept with your actual data and integration targets, not synthetic data. Synthetic tests almost always overestimate real-world performance.

Implementing your custom AI agent

With architecture and tools selected, implementation is where precision separates successful deployments from costly restarts. Follow a structured, stage-gated process to reduce risk at every step.

- Define the agent specification in detail. Document every action the agent can take, every tool it can call, every data source it can access, and every decision it must escalate. Ambiguity at this stage becomes bugs in production.

- Build the core reasoning loop. Implement the perception, reasoning, and action cycle with your chosen framework. Keep the initial action space narrow, then expand it.

- Integrate with business systems. Connect your agent to APIs, databases, and communication channels. Use sandbox or staging environments first. Never connect a new agent to production data without extensive validation.

- Implement layered guardrails. Add input filtering to block malicious or malformed inputs, PII redaction to protect sensitive data, and output validation to catch hallucinated or out-of-range responses before they reach users or downstream systems.

- Run adversarial testing. Deliberately try to break the agent. Push edge cases: long inputs, contradictory instructions, rapid-fire requests, and injection attempts. Real-world edge cases include infinite loops, context overflow, tool failures, hallucinations, prompt injection, and non-deterministic outputs.

- Deploy with human-in-loop review. For the first weeks in production, route all agent outputs through a human review queue. This is your real-world test environment and your fastest learning cycle.

- Iterate based on real behavior. Collect data on agent decisions, errors, and escalations. Use that data to refine prompts, expand or constrain action spaces, and update guardrails.
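The core reasoning loop and the infinite-loop risk from the steps above can be sketched in a few lines. This is a framework-agnostic illustration, not how any particular toolkit implements it: `decide` stands in for your model call and returns either the next tool call or `None` when it is done, and `run_loop`, `idempotent`, and `IterationLimitExceeded` are hypothetical names.

```python
from typing import Callable, Dict, List, Optional, Tuple

Step = Tuple[str, dict]  # (tool name, arguments)


class IterationLimitExceeded(RuntimeError):
    """Raised when the loop uses up its step budget without finishing."""


def run_loop(decide: Callable[[list], Optional[Step]],
             tools: Dict[str, Callable[[dict], str]],
             max_steps: int = 5) -> List[Tuple[str, str]]:
    history: List[Tuple[str, str]] = []
    while True:
        step = decide(history)           # reason over what has happened so far
        if step is None:                 # planner signals completion
            return history
        if len(history) >= max_steps:    # hard stop instead of looping forever
            raise IterationLimitExceeded(f"no result after {max_steps} steps")
        name, args = step
        history.append((name, tools[name](args)))  # act, then record the result


def idempotent(tool: Callable[[dict], str]) -> Callable[[str, dict], str]:
    """Wrap a tool so repeating a call with the same key has no extra effect."""
    results: Dict[str, str] = {}

    def wrapper(key: str, args: dict) -> str:
        if key not in results:
            results[key] = tool(args)
        return results[key]

    return wrapper
```

The step budget turns a runaway loop into a visible error you can route to escalation. The idempotency wrapper matters because retries and replays are normal in agent systems, and a repeated "charge customer" call should not charge twice. In production the result cache would live in durable storage rather than in memory.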

"Mitigate edge case risks with iteration limits, layered guardrails including input filtering and PII redaction, human-in-loop checkpoints, idempotent tool design, and exponential backoff retries for failed tool calls."

Two errors deserve special attention. First, teams routinely underestimate prompt injection risk. A user or upstream system can craft inputs that hijack agent behavior. Defense requires input sanitization and strict system prompt separation. Second, tool failures cascade. If your agent calls three external APIs and one fails silently, the agent may continue reasoning on incomplete data. Design every tool call to fail loudly and handle retries with exponential backoff.
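A retry wrapper along these lines is one way to get both behaviors: retries with exponentially growing delays, and a loud exception once the attempts run out. Treat it as a sketch under assumptions. The names `call_with_backoff` and `ToolCallFailed` are illustrative, the delays are arbitrary defaults, and real code would usually catch only transient errors such as timeouts and add random jitter.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class ToolCallFailed(RuntimeError):
    """Raised once every retry is exhausted, so the failure cannot pass silently."""


def call_with_backoff(tool: Callable[[], T],
                      max_attempts: int = 4,
                      base_delay: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            return tool()
        except Exception as exc:  # assumption: narrow this to transient errors
            last_error = exc
            if attempt < max_attempts - 1:
                # Delays double each time: base, 2x base, 4x base, ...
                sleep(base_delay * 2 ** attempt)
    # Fail loudly: the agent must never keep reasoning on a missing result.
    raise ToolCallFailed(
        f"tool failed after {max_attempts} attempts") from last_error
```

The `sleep` parameter exists so tests can substitute a recorder for real waiting. With the defaults, a tool that never recovers is retried after 0.5, 1.0, and 2.0 seconds and then raises `ToolCallFailed`.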

For teams building customer-facing agents, explore conversational AI solutions that include pre-built guardrail frameworks, saving significant implementation time. Equally important, embed AI ethics review into your implementation checklist, not as an afterthought but as a gate before launch.
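If you build the guardrail layers yourself, the three named in the implementation steps (input filtering, PII redaction, and output validation) might start out like the sketch below. The patterns and limits are deliberately simple and are assumptions for illustration only: phrase matching will not stop a determined prompt injection, and real PII detection needs far broader coverage than one email pattern and one card-number pattern.

```python
import re

# Illustrative patterns only; production PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD_RE = re.compile(r"\b\d{13,16}\b")  # unbroken card-like digit runs
INJECTION_MARKERS = ("ignore previous instructions",
                     "disregard the system prompt")
MAX_INPUT_CHARS = 4000  # hypothetical limit to guard against context overflow


def filter_input(text: str) -> str:
    """Layer 1: reject oversized input and obvious injection phrasing."""
    if len(text) > MAX_INPUT_CHARS:
        raise ValueError("input too long")
    lowered = text.lower()
    if any(marker in lowered for marker in INJECTION_MARKERS):
        raise ValueError("possible prompt injection")
    return text


def redact_pii(text: str) -> str:
    """Layer 2: mask sensitive values before they reach the model or the logs."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def validate_amount(amount: float, low: float = 0.0,
                    high: float = 10_000.0) -> float:
    """Layer 3: block out-of-range values before they reach downstream systems."""
    if not (low <= amount <= high):
        raise ValueError(f"amount {amount} outside [{low}, {high}]")
    return amount
```

Each layer raises rather than silently repairing the input, so a rejection shows up in your logs and escalation metrics instead of disappearing.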

Pro Tip: Create a dedicated "red team" session with two to three team members whose only job is to break the agent before it goes live. The issues they surface will be far cheaper to fix before deployment than after.

Monitoring, scaling, and iterative improvement

Deploying your agent is not the finish line. It is the starting point of an ongoing operational discipline. Agents that perform well in week one can drift significantly by week eight as data distributions shift, user behavior changes, or external APIs evolve. Building a monitoring framework before launch, not after, is what separates stable production agents from costly maintenance burdens.

Key monitoring practices to implement from day one:

- Behavioral logging: Log every agent decision, input, output, and tool call. This data is your primary diagnostic tool when something goes wrong.

- Performance dashboards: Track task completion rates, error rates, escalation rates, and latency in real time. Set alert thresholds so your team knows immediately when something deviates.

- Drift detection: Monitor statistical distributions of inputs and outputs over time. If the agent starts producing responses that look different from its validated baseline, that is a signal to investigate before users notice.

- Feedback loops: Build mechanisms for human reviewers and end users to flag incorrect outputs. Every flag is a labeled training example for your next improvement cycle.

- Compliance and audit trails: Especially in regulated industries, maintain immutable logs of agent decisions with timestamps and context. This is non-negotiable for financial services, healthcare, and legal applications.
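For the drift detection practice above, one common and simple statistic is the Population Stability Index (PSI), which compares the distribution of a categorical signal, such as the agent's decision labels, against a validated baseline. The sketch below is one possible approach rather than a prescribed method. The alert level of 0.2 is an assumption; thresholds between roughly 0.1 and 0.25 are commonly quoted rules of thumb, and you should calibrate against your own data.

```python
import math
from collections import Counter
from typing import Sequence

PSI_ALERT = 0.2  # hypothetical alert level; tune for your workload


def psi(baseline: Sequence[str], current: Sequence[str],
        eps: float = 1e-6) -> float:
    """Population Stability Index between two samples of categorical labels."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    b, c = Counter(baseline), Counter(current)
    score = 0.0
    for label in set(b) | set(c):
        # eps keeps log() defined when a label is missing from one sample.
        pb = max(b[label] / len(baseline), eps)
        pc = max(c[label] / len(current), eps)
        score += (pc - pb) * math.log(pc / pb)
    return score


validated = ["approve"] * 80 + ["escalate"] * 20   # baseline week
this_week = ["approve"] * 50 + ["escalate"] * 50   # escalations have jumped
drifted = psi(validated, this_week) > PSI_ALERT
```

Identical distributions score exactly zero, and the score grows as the mix of labels moves away from the baseline. In this example the escalation share rising from 20% to 50% pushes the score well past the alert level.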

Research confirms that production success depends on evaluating full system behavior, not just individual model performance. A model that scores well in isolation can fail systematically when it interacts with real users and live integrations. Your monitoring framework must capture the full system, not just the model layer.
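System-level evaluation can start from the behavioral logs themselves. The sketch below rolls a batch of logged runs into completion, escalation, and error rates and compares them against alert ceilings. The `RunRecord` shape, the outcome labels, and the ceiling values are hypothetical; adapt them to whatever your logging pipeline actually records.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RunRecord:
    outcome: str       # assumed labels: "completed", "escalated", "error"
    latency_ms: float  # end-to-end latency, including tool calls


def summarize(records: List[RunRecord]) -> Dict[str, float]:
    """Aggregate end-to-end outcomes, not just model-level scores."""
    if not records:
        raise ValueError("no records to summarize")
    n = len(records)
    counts = Counter(r.outcome for r in records)
    return {
        "completion_rate": counts["completed"] / n,
        "escalation_rate": counts["escalated"] / n,
        "error_rate": counts["error"] / n,
        "mean_latency_ms": sum(r.latency_ms for r in records) / n,
    }


def breaches(summary: Dict[str, float],
             ceilings: Dict[str, float]) -> List[str]:
    """Return the metrics that exceed their alert ceiling, sorted by name."""
    return sorted(k for k, limit in ceilings.items() if summary[k] > limit)
```

Feeding `breaches` a ceiling such as `{"error_rate": 0.05}` gives your alerting layer a short list of metric names to page on, which keeps threshold logic in one reviewable place.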

For scaling, adopt a horizontal expansion model. Prove the agent in one business unit or workflow before rolling it out organization-wide. Each expansion introduces new data patterns, new integration points, and new user behaviors. Treat each as a mini-deployment with its own validation cycle. Review AI agents for business automation case studies to understand how leading organizations structure phased rollouts.

| Scaling stage | Key action | Success indicator |
|---|---|---|
| Pilot (1 team) | Validate accuracy and guardrails | Error rate below target threshold |
| Expansion (1 department) | Integrate additional data sources | Stable latency under higher volume |
| Enterprise rollout | Governance and compliance review | Audit trail completeness |
| Cross-unit deployment | Modular agent reuse | Reduced setup time per new unit |

The trajectory of AI in software development is clear: organizations that build strong operational foundations early outpace those that scale fast without governance. Speed without structure creates technical debt that is far more expensive to unwind than to avoid.

Editorial perspective: Why governance and 'agentish' design win long-term

The loudest voices in the AI agent space push for maximum autonomy. Move fast, give the agent more tools, expand its action space, let it plan and execute without friction. We think that framing is exactly wrong for most business contexts, and the data backs us up.

Forrester's analysis on the complexity gap makes a critical point: fully agentic systems compound errors in ways that are genuinely hard to detect until the damage is done. One bad tool call triggers a flawed reasoning step, which triggers another bad action. By the time a human reviews the output, the agent has taken five wrong turns.

Agentish design prevents this cascade by construction. You keep the action space bounded, require human checkpoints for consequential decisions, and expand autonomy incrementally as confidence is earned through real-world performance. This is not caution for its own sake. It is engineering discipline. Governance is what makes an AI agent trustworthy enough to own a business process. Read more about AI governance insights to build the framework your agents need from day one. Long-term success belongs to the teams that build for reliability, not just capability.

Explore custom AI agent solutions with Proud Lion Studios

Building a production-grade AI agent is a multi-disciplinary challenge that spans software engineering, machine learning, governance, and business process design. Getting each layer right requires experience across all of them working together.

Proud Lion Studios brings end-to-end expertise to custom AI agent services, from initial workflow audits and architecture design through deployment, monitoring, and iterative refinement. Our UAE-based technical team works with startups and enterprises across multiple industries, delivering agents that are purpose-built for your operations rather than adapted from generic templates. We also integrate AI solutions with blockchain development solutions where trust, auditability, and decentralized logic add genuine business value. Reach out to start a conversation about where custom AI agents can deliver measurable results for your team.

Frequently asked questions

What are 'agentish' AI systems, and why are they preferred?

'Agentish' systems are AI agents that operate with controlled, bounded autonomy, which makes them more reliable and easier to govern than fully autonomous agents. Most enterprise teams prefer them because they reduce the risk of compounding errors in production.

How can you prevent AI agents from entering infinite loops or making critical errors?

Set iteration limits, implement layered guardrails including input filtering and PII redaction, require human-in-loop review for high-stakes actions, and run adversarial testing before every production deployment.

What causes high failure rates in AI agent projects?

Gartner forecasts 40%+ project cancellations by 2027, driven by cost overruns, ignored orchestration risks, and poor governance. Teams that skip system-level evaluation in favor of benchmark scores are most at risk.

How should custom AI agent projects be monitored post-deployment?

Continual behavioral logging, drift detection, real-time performance dashboards, and governance audit trails are essential for ensuring agent reliability and compliance as your business needs evolve.